
11/21/2023

Airflow scheduler no heartbeat

When using the SequentialExecutor with a SQLite database, we often see the Airflow scheduler periodically complaining about no heartbeat in the web UI. In most cases this just means that the task will probably be scheduled soon, although scheduled tasks will still show up as failed in the GUI and in the database. One workaround that was tried: restart the Airflow scheduler, copy the DAGs back to their folders, and wait.

The LocalExecutor can be used for a single production Airflow system and can utilize all the resources of a given host system. For more details, see Apache Airflow Executors.
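The difference between running one task at a time and running tasks in parallel can be illustrated without Airflow at all. The following is a minimal sketch that uses Python's standard `ThreadPoolExecutor` as a stand-in for an executor; the task names and sleep durations are hypothetical, and this is not how Airflow implements its executors. With a single worker, the short task has to wait behind the long-running one; with two workers it finishes first.

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor


def run_tasks(max_workers):
    """Run one long and one short task; return task names in completion order."""
    finished = []
    lock = threading.Lock()

    def task(name, seconds):
        time.sleep(seconds)  # simulate work
        with lock:
            finished.append(name)

    # Leaving the "with" block waits for all submitted tasks to complete.
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        pool.submit(task, "long_running", 0.3)
        pool.submit(task, "short", 0.01)
    return finished


# One worker: tasks run strictly one after another (sequential behaviour).
sequential_order = run_tasks(max_workers=1)
# Two workers: the short task is not blocked by the long-running one.
parallel_order = run_tasks(max_workers=2)

print("one worker: ", sequential_order)
print("two workers:", parallel_order)
```

This is only an analogy for why a long-running task can hold up everything else under a sequential setup.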

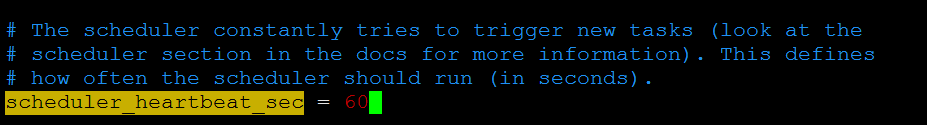

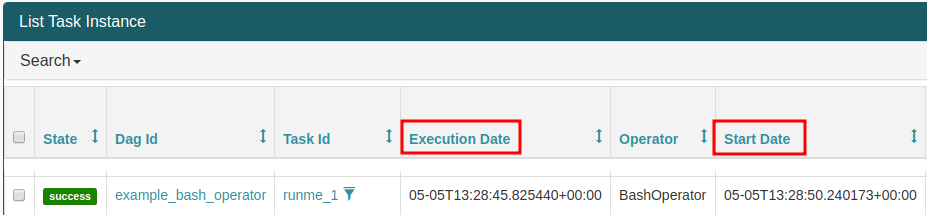

Airflow consists of several components: Workers - Execute the assigned tasks. Scheduler - Responsible for adding the necessary tasks to the queue. Web server - HTTP server that provides access to DAG/task status information. Database - Contains information about the status of tasks, DAGs, variables, connections, etc.

There are 4 scheduler threads and 4 Celery worker tasks. We expect all other DAGs to start according to their schedule while a long-running task is running. This is how the LocalExecutor should work according to the documentation. Instead, the tasks that are not running are shown in the queued state (grey icon); when hovering over the task icon, the operator is null and the task details say: "All dependencies are met but the task instance is not running." Manual triggering also doesn't help: triggered tasks are not started until the long-running task is finished.

When there is no long-running task running, we see that the scheduler tries to check whether any task could run and checks the parallelism/concurrency limitations for them. But with a long-running task there are no log messages like this. We also checked server resources for those cases, but there was a lot of free RAM and CPU at that time, so it shouldn't be the cause.

Steps undertaken: stop the Airflow scheduler, then delete all DAGs and their logs from the folders and from the database.

This fixed the problem: (1) Use PostgreSQL instead of SQLite. (2) Switch from SequentialExecutor to LocalExecutor.
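The two-step fix (PostgreSQL instead of SQLite, LocalExecutor instead of SequentialExecutor) comes down to two configuration values. Below is a small sketch that builds them as environment variables, following Airflow's `AIRFLOW__{SECTION}__{KEY}` naming convention. The helper name `local_executor_env` and the credentials are hypothetical, and the section name is an assumption about your version: `sql_alchemy_conn` lives under `[database]` in newer Airflow 2.x releases and under `[core]` in older ones.

```python
from urllib.parse import quote_plus


def local_executor_env(user, password, host, database, port=5432):
    """Build environment overrides for LocalExecutor with a PostgreSQL backend.

    Hypothetical helper for illustration; it only assembles strings.
    """
    # Percent-encode the credentials so characters like "@" do not break the URL.
    conn = (
        f"postgresql+psycopg2://{quote_plus(user)}:{quote_plus(password)}"
        f"@{host}:{port}/{database}"
    )
    return {
        "AIRFLOW__CORE__EXECUTOR": "LocalExecutor",
        # Assumption: newer Airflow 2.x. On older versions the key is
        # AIRFLOW__CORE__SQL_ALCHEMY_CONN instead.
        "AIRFLOW__DATABASE__SQL_ALCHEMY_CONN": conn,
    }


# Placeholder credentials, not taken from the original post.
env = local_executor_env("airflow", "p@ss", "localhost", "airflow")
for key, value in env.items():
    print(f"export {key}='{value}'")
```

After changing the backend, the metadata database has to be initialised again and the scheduler and web server restarted before the new settings take effect.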
As part of Apache Airflow 2.0, a key area of focus has been the Airflow scheduler. The scheduler reads the data pipelines represented as Directed Acyclic Graphs (DAGs), schedules the contained tasks, monitors task execution, and then triggers the downstream tasks once their dependencies are met.

We examined the Airflow scheduler logs and figured out that the scheduler just doesn't try to grab new tasks while a long-running task is running.

The warning shown in the web UI reads: "The scheduler does not appear to be running. Last heartbeat was received XX minutes ago. The DAGs list may not update, and new tasks will not be scheduled." In one report the message said the last heartbeat was received 32 seconds ago, and the Airflow scheduler had to be restarted frequently while running DAGs. Running `pkill -f airflow` and restarting both the scheduler and the webserver was also tried, but the issue remained.

Another report: I'm testing the use of Airflow, and after triggering a (seemingly) large number of DAGs at the same time, it seems to just fail to schedule anything and starts killing processes. These are the logs the scheduler prints: WARNING - Killing PID 42177. I don't even know what these processes were, as I can't really see them after they are killed. After waiting for it to finish killing whatever processes it is killing, it starts executing all of the tasks properly. It seems to happen only after I trigger a large number (>100) of DAGs at about the same time using external triggering, as in: `airflow trigger_dag DAG_NAME`. I'm using a LocalExecutor with a PostgreSQL backend DB.

For connections and variables, we recommend the following steps: learn how to create secret keys for your Apache Airflow connection and variables in Configuring an Apache Airflow connection using a Secrets Manager secret, and learn how to use the secret key for an Apache Airflow variable (test-variable) in Using a secret key in AWS Secrets Manager for an Apache Airflow variable.
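The heartbeat warning is essentially a staleness check: if the most recent scheduler heartbeat is older than some threshold, the UI reports the scheduler as not running. The sketch below reproduces that logic in plain Python. It is not Airflow's own code; the function name `scheduler_status` is hypothetical, and the 30-second threshold is an assumption meant to mirror the `scheduler_health_check_threshold` setting, so check the value in your own configuration.

```python
from datetime import datetime, timedelta, timezone

# Assumed threshold; compare with [scheduler] scheduler_health_check_threshold.
HEALTH_CHECK_THRESHOLD = timedelta(seconds=30)


def scheduler_status(last_heartbeat, now, threshold=HEALTH_CHECK_THRESHOLD):
    """Return a UI-style status message based on the age of the last heartbeat."""
    age = now - last_heartbeat
    if age <= threshold:
        return "Scheduler looks healthy."
    seconds = int(age.total_seconds())
    # Report seconds for short gaps and whole minutes otherwise.
    ago = f"{seconds} seconds" if seconds < 60 else f"{seconds // 60} minutes"
    return (
        "The scheduler does not appear to be running. "
        f"Last heartbeat was received {ago} ago."
    )


now = datetime(2023, 11, 21, 12, 0, 0, tzinfo=timezone.utc)
stale_message = scheduler_status(now - timedelta(seconds=32), now)
fresh_message = scheduler_status(now - timedelta(seconds=10), now)
print(stale_message)
print(fresh_message)
```

Under these assumptions, a heartbeat that is 32 seconds old is only just past the threshold, which fits the observation that the warning often clears by itself once the scheduler gets around to writing its next heartbeat.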
